Research review | AI Coding | Score 86

Cursor Review for AI Coding Buyers

AI coding tools are judged by how well they fit daily development work, not by how impressive a single demo looks. This Cursor review is written for readers who want a practical decision page before they click through to the official website. The focus is not hype; it is whether Cursor fits a real workflow, what should be verified, and which alternatives deserve a look.

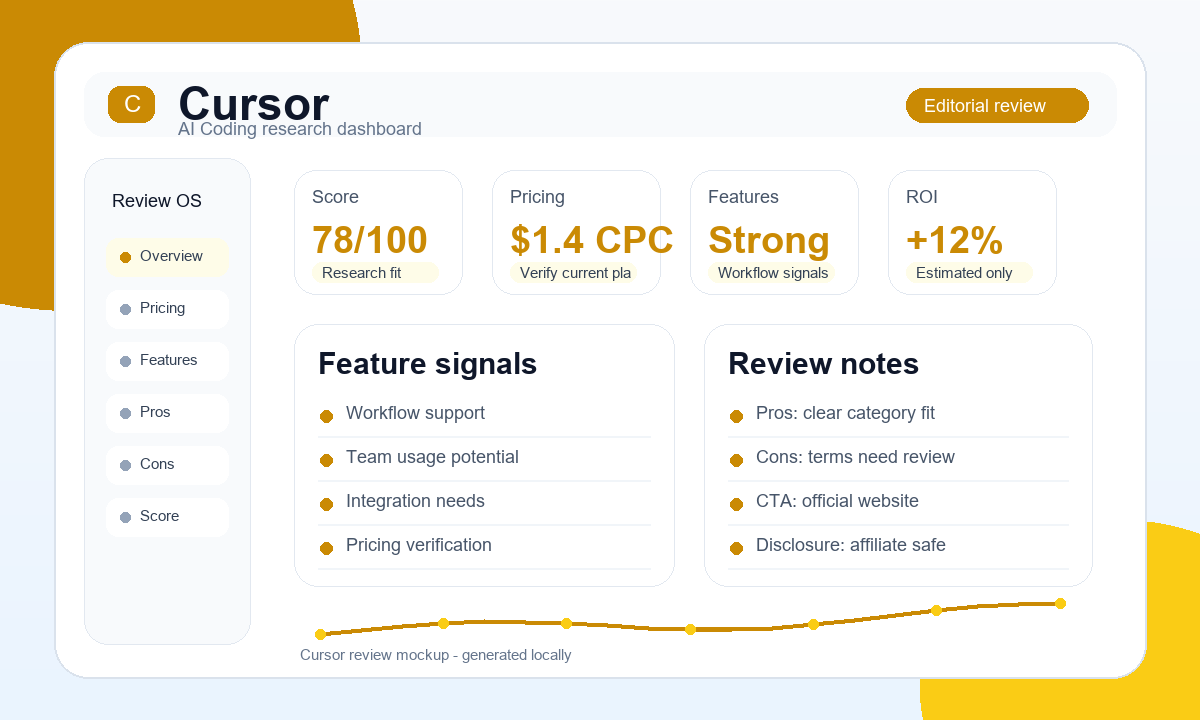

Editorial score: 86 | Risk level: Low | Trend: Rising

Pricing summary: verify current plan limits, usage caps, team seats, cancellation terms, and official pricing before buying or promoting Cursor.


Affiliate disclosure

Some links may be affiliate links. We may earn a commission at no extra cost to you. Reviews and comparisons are research-style content, not guaranteed results.

Quick verdict

Cursor is worth shortlisting if your AI Coding workflow needs developer productivity support, code context, and a workflow that does not interrupt normal engineering habits. It should still be checked against current pricing, plan limits, support expectations, and affiliate policy before any serious purchase or promotion.

Score signal

86. Treat this as an editorial research score, not proof that the tool will fit every buyer.

Risk signal

Low. The main risk is usually policy, pricing, or workflow mismatch rather than the brand name itself.

Market signal

Medium competition. Comparison and review pages are usually safer than broad claims.

Overview

Cursor sits in the AI Coding category, so the review lens is workflow fit rather than hype. In this category, the practical question is whether the tool can support writing, reviewing, refactoring, and explaining code inside a developer workflow without adding a new layer of manual cleanup.

The market around this type of software can be noisy. Some readers compare tools because they want a faster workflow, while others are checking pricing, alternatives, or whether the product is suitable for a team. This page keeps those questions separate so the decision does not come from a single feature list.

Current research signals show Medium competition. That means a direct paid traffic test should be handled carefully, and organic pages should include comparison context, honest limitations, and a clear disclosure. Recommended discovery channels for this tool category include Google Search, Reddit, and developer newsletters.

A useful review of Cursor should therefore answer three buyer questions: what job the product is likely to help with, what kind of user should avoid it, and what proof still needs to come from the official website before money or traffic is committed.

My current AI coding workflow with Cursor

Cursor is strongest when the repository already has a clean shape and I need fast inline edits, file-aware questions, and controlled refactors.

In a real builder workflow, I do not ask one assistant to own the whole project. I use agent-style tools for the rough structure, Cursor-style editing for controlled changes, Copilot-style autocomplete for small local work, and Codex-style reasoning when the build or deployment pipeline breaks. That split keeps cost and cleanup under control.

For Cursor, the practical test is simple: can it help me understand an existing module, make a small multi-file change, explain the failure, and keep the final diff readable? If the answer is yes, the tool belongs on the shortlist. If it only produces confident code that needs heavy cleanup, the subscription is harder to justify.
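One way to make the "readable diff" part of that test concrete is to measure the size of the change the assistant produced. The sketch below parses the text printed by `git diff --numstat`, a standard Git option that reports added lines, deleted lines, and path for each changed file. The names `DiffSummary`, `summarize_numstat`, and `is_reviewable`, the sample paths, and the thresholds (5 files, 200 changed lines) are illustrative assumptions, not values from Cursor or from this review's testing.

```python
from dataclasses import dataclass


@dataclass
class DiffSummary:
    files: int
    added: int
    deleted: int


def summarize_numstat(numstat_output: str) -> DiffSummary:
    """Summarize the text printed by `git diff --numstat`.

    Each line holds three tab-separated fields: added, deleted, path.
    Binary files show "-" in both count columns, so they count as a
    changed file but add nothing to the line totals.
    """
    files = added = deleted = 0
    for line in numstat_output.splitlines():
        parts = line.split("\t", 2)
        if len(parts) != 3:
            continue  # skip blank or unexpected lines
        files += 1
        if parts[0].isdigit():
            added += int(parts[0])
        if parts[1].isdigit():
            deleted += int(parts[1])
    return DiffSummary(files, added, deleted)


def is_reviewable(summary: DiffSummary, max_files: int = 5, max_lines: int = 200) -> bool:
    """Assumed rule of thumb: a small diff is easier to read and review."""
    return summary.files <= max_files and summary.added + summary.deleted <= max_lines


if __name__ == "__main__":
    # Hypothetical numstat output for a small multi-file change.
    sample = "12\t3\tsrc/app.py\n4\t0\ttests/test_app.py\n-\t-\tdocs/logo.png\n"
    summary = summarize_numstat(sample)
    print(summary, is_reviewable(summary))
```

In practice the input would come from running `git diff --numstat` after the assistant's edit. A diff that fails the check is a signal to ask for a narrower change, not proof that the code is wrong.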

What failed during practical testing

Cursor can loop when the prompt is too broad. I have seen the same fix rephrased several times instead of a smaller diagnosis. The fix is to narrow the file scope and ask for one patch with a testable reason.

The mistake I try to avoid is letting the assistant grow the problem. If the first fix fails, I ask for a diagnosis, the exact files involved, and the smallest safe change. That prompt often works better than asking for another implementation.
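The narrowing step described above can be turned into a reusable prompt template. This is a minimal sketch: `build_diagnosis_prompt` is a hypothetical helper, not a Cursor feature or API, and the prompt wording and the 30-line error limit are assumptions to adapt to your own project.

```python
def build_diagnosis_prompt(
    error_output: str,
    files: list[str],
    max_error_lines: int = 30,
) -> str:
    """Build a prompt that asks for a diagnosis and one small patch.

    The prompt names the files in scope and includes only the tail of
    the error output, so the assistant is not invited to grow the problem.
    """
    if not files:
        raise ValueError("Name at least one file to keep the scope narrow.")

    # Keep only the last lines of the error output; the failure is usually there.
    tail = "\n".join(error_output.strip().splitlines()[-max_error_lines:])
    file_list = "\n".join(f"- {path}" for path in files)

    return (
        "The previous fix did not work. Do not write a new implementation.\n"
        "1. Diagnose the most likely cause of the error below.\n"
        "2. List the exact files involved.\n"
        "3. Propose the smallest safe change as one patch, with a reason I can test.\n\n"
        f"Limit your answer to these files:\n{file_list}\n\n"
        f"Error output (last {max_error_lines} lines at most):\n{tail}\n"
    )


if __name__ == "__main__":
    # Hypothetical file name and error text, for illustration only.
    print(build_diagnosis_prompt("KeyError: 'id'", ["src/orders.py"]))
```

The resulting text can be pasted into the assistant's chat panel. Requiring at least one file name is a deliberate choice: it forces the scope decision to happen before the prompt is sent.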

Deployment problems are a separate test. A strong AI coding assistant should read error output, check generated files, and reason about configuration before editing application code. This is where Codex-style repair can feel faster than a tool that keeps writing new code after every failure.

Practical AI coding comparison

| Area | Cursor | Windsurf | GitHub Copilot |
|---|---|---|---|
| Best daily use | Inline editing, fast iteration, codebase explanation, small refactors, and editing a known module without leaving the editor | Rapid scaffolding and agent flow | Lightweight autocomplete |
| Where it can fail | Repeating the same repair loop when the prompt is too broad; narrow the file scope and ask for one patch with a testable reason | Duplicating logic across files | Missing broader project context |
| Debugging style | Good when file scope is clear; use small diffs and verify tests | Good when the agent stays focused | Best for local syntax and API hints |
| Soft next step | Try Cursor and check current pricing | Read Windsurf review | Read Copilot review |

Best for / Not best for

Best for

- Developers comparing AI coding assistants.

- Technical teams with repeated code review or refactor work.

- Buyers who can test the tool in an existing repository.

Not best for

- Non-technical users expecting a no-code business app.

- Teams that cannot review generated code safely.

- Buyers who need guaranteed code quality without manual checks.

Cursor is most relevant for readers who already know they need help in the AI Coding workflow and want to compare a focused tool against broader alternatives. It may be the wrong choice if the use case is vague, if the team cannot verify integrations, or if the buying decision depends on a feature that is not confirmed by the vendor.

Feature checklist

Use this checklist as a structured way to evaluate Cursor. It is not a substitute for official documentation, but it helps separate a useful product fit from a tool that merely looks good on a landing page.

| Area to check | Why it matters | Buyer action |
|---|---|---|
| Editor workflow | Coding tools must fit the place where developers already work. | Test it inside a real project. |
| Context handling | Repository awareness affects answer quality and usefulness. | Check how it handles multi-file tasks. |
| Review safety | AI suggestions still need human review. | Keep normal code review active. |

Best use cases

The strongest reason to research Cursor is not that it belongs to a popular category. It is that a buyer may have a repeated task in AI Coding where a structured tool can reduce manual work, improve consistency, or make collaboration easier to manage.

A practical example is a developer using AI assistance to inspect an unfamiliar file, draft a safer change, and review edge cases before committing code. In that kind of workflow, the value comes from repeatability: the same process can be run again, reviewed by another person, and improved when results are weak.

Workflow setup

Use the tool when you need a repeatable workflow that can be documented, reviewed, and improved over time.

Team comparison

Compare it with alternatives when several team members need to understand tradeoffs before choosing a platform.

Affiliate research

Use review-style content when direct linking or trademark bidding is unclear and a safer disclosure page is needed.

For paid traffic or affiliate promotion, the safer route is to start with review and comparison keywords. Avoid implying guaranteed outcomes, fixed savings, or current pricing unless those details were verified directly from the vendor at the time of publishing.

Pros and cons

| Pros | Cons |
|---|---|
| + Strong fit when the buyer already has recurring coding, debugging, or documentation work. | - Can disappoint teams that expect AI to replace engineering judgment or code review. |
| + Can support comparison and review content without exaggerated claims. | - Not every traffic source or direct-linking method may be allowed. |
| + Useful for building a practical shortlist with alternatives. | - Real value depends on the user's process, team size, and integrations. |

For Cursor, the main advantage is that it gives buyers a concrete product to evaluate instead of a vague category search. The main limitation is that public research alone is not enough for final buying or promotion decisions. Always confirm terms, plan limits, current pricing, cancellation rules, and allowed promotional methods.

Real buying considerations

Before treating Cursor as the right AI Coding choice, slow down and check the operational details that rarely fit into a short product summary. The biggest mistakes usually happen when a buyer assumes a feature is included, a traffic source is allowed, or a team workflow will transfer cleanly from another product.

- Workflow proof: write down the exact process you expect Cursor to improve, then check whether the product supports each step.

- Team fit: confirm seats, permissions, sharing, and collaboration limits if more than one person will use it.

- Policy fit: for affiliate or paid traffic work, confirm PPC, brand bidding, direct linking, coupon rules, and disclosure requirements.

- Exit risk: understand export options, cancellation rules, and how hard it would be to move to another tool later.

This review intentionally avoids guaranteed outcomes. A tool can be strong in public research and still be wrong for a particular team, budget, country, or traffic strategy.

Pricing note

Pricing can change, and this page does not treat any plan, discount, or payout as permanent. Before buying Cursor, check the official pricing page for plan limits, included seats, usage caps, cancellation rules, trial terms, and whether the features you need are available on the plan you are considering.

If you are researching the product as an affiliate, also check the affiliate program terms separately. A product can be attractive for buyers while still having restrictions around PPC, coupon keywords, brand bidding, direct linking, or country eligibility.

Alternatives

Cursor should be compared against alternatives before a serious purchase or promotion decision. Alternatives help reveal whether the product is priced fairly for your workflow, whether a simpler tool would be enough, and whether a different category solves the same problem with less operational friction.

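- GitHub Copilot for lightweight autocomplete within an AI Coding workflow.

- Windsurf for rapid scaffolding and agent flow within an AI Coding workflow.

The remaining tools sit in other categories and are not substitutes for a coding assistant: ActiveCampaign (Email Marketing), AdCreative AI (Marketing), Canva (AI Design), and Copy.ai (AI Writing). They are relevant only if the underlying job turns out not to be a coding problem.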

When comparing alternatives, avoid treating a higher score as automatic proof. Look at workflow fit, policy clarity, integrations, support expectations, cancellation terms, and whether the product solves the specific job that led you to search in the first place.

Final recommendation

Cursor is worth researching when the use case is clear and the buyer understands what must be verified on the official website. It is not a tool to promote with exaggerated promises, fixed income claims, or outdated pricing details. The safer approach is to use this review as a screening page, then check the vendor's latest terms before any purchase or campaign.

For affiliate work, the current risk level is Low. That does not mean the offer is automatically safe. It means the page should keep disclosure visible, send outbound clicks through tracking, and avoid claims that cannot be supported by current vendor information.

Shortlist it when the team can test it inside a real repository and compare output quality against existing editor habits.

Recommended next step: compare Cursor with at least two alternatives, verify current pricing, and document the policy notes before using paid traffic or recommending it to a specific audience.

Next step

If Cursor still looks relevant after this review, visit the official website and verify the latest product details yourself. The outbound link may route to an approved affiliate URL when one is available; otherwise it goes directly to the official site.

FAQ

Is Cursor beginner-friendly?

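This review did not test Cursor with beginners. It is aimed at developers who can review generated code inside an existing repository; non-technical users expecting a no-code business app are not a good fit. Check the vendor's current documentation and trial terms before deciding.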

How should I check Cursor pricing?

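Go to the official pricing page and check plan limits, included seats, usage caps, cancellation rules, trial terms, and whether the features you need are on the plan you are considering. Pricing can change, so verify it at the time you buy or publish.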

What are the best alternatives to Cursor?

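GitHub Copilot and Windsurf are the AI coding alternatives compared in this review. Copilot leans toward lightweight autocomplete, and Windsurf toward rapid scaffolding and agent flow. Compare at least two alternatives on workflow fit and current terms before deciding.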

Can teams use Cursor?

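Teams with repeated code review or refactor work are among the best-fit buyers, provided they can review generated code safely. Before rolling it out, confirm seats, permissions, sharing, and collaboration limits on the official vendor website.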

Does Cursor support integrations?

This review did not verify specific integrations. Test Cursor inside a real project, and confirm any integration your team depends on with the official vendor documentation, because integrations and terms can change.

What should I verify before promoting Cursor as an affiliate?

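Check the affiliate program terms separately from the product terms. Confirm the rules on PPC, brand bidding, direct linking, coupon keywords, country eligibility, and disclosure requirements, and verify current pricing before publishing any claim.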